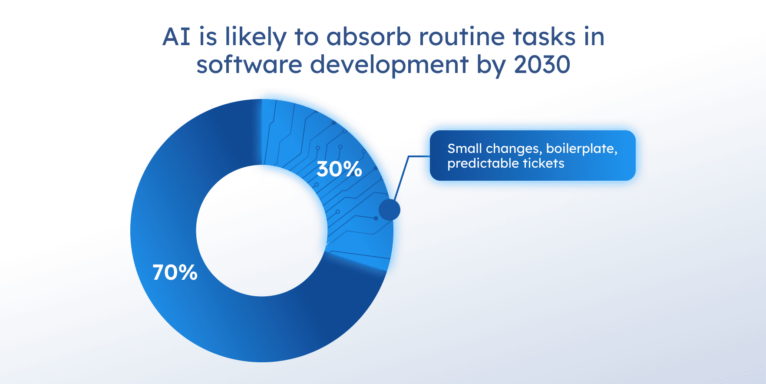

Will AI replace programmers? By 2030, AI is likely to absorb 20–30% of routine implementation work and replace junior software developers whose job is mostly small changes, boilerplate, and predictable tickets. Our claim is based on current public research and adoption trends.

The McKinsey Global Institute’s long-standing forecast states that generative AI could automate activities accounting for up to 30% of all work hours across the economy. Gartner points in the same direction from the adoption side: by 2030, CIOs expect 75% of IT work to be done by humans using AI, with 25% done by AI alone. Taken together, these signals suggest that AI-assisted development will only become more common, and that routine implementation work is likely to shrink as a share of the job.

We have already seen AI take over a meaningful share of routine work in other industries. Customer service is a clear example. Salesforce reports that AI resolved 30% of service cases in 2025, with that figure expected to reach 50% by 2027. Gartner goes further and predicts that by 2029 agentic AI will autonomously resolve 80% of common customer service issues. That does not mean software development will follow the same curve, but it does show that AI can automate a real share of work once tasks become structured, repetitive, and easy to verify.

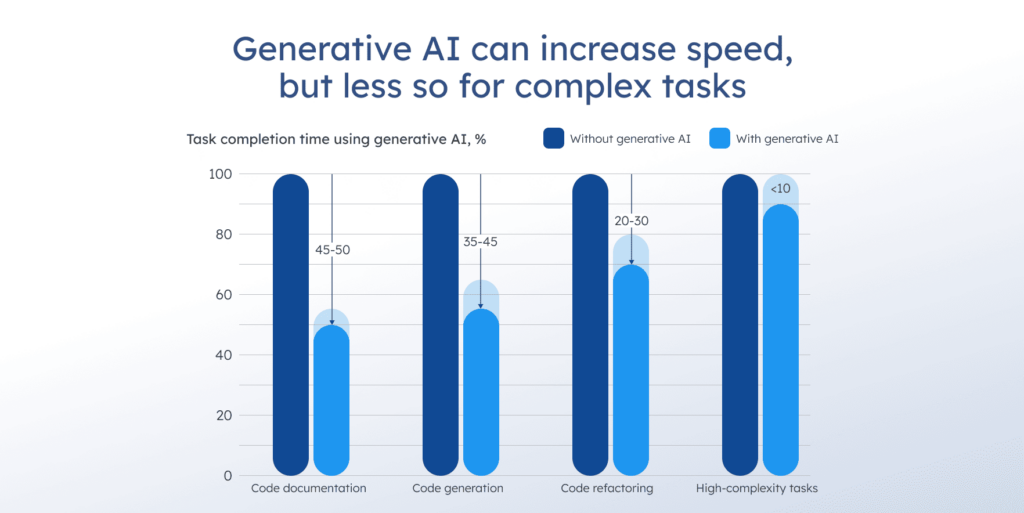

Task completion time with and without generative AI. Source

These tendencies haven’t removed the need for software engineering across the board. The U.S. Bureau of Labor Statistics still projects software developer employment to grow by 17.9% by 2033. That points to a change in task mix, not a simple wipeout of software roles.

It now makes more sense to treat AI replacing software engineers as task displacement first, role redesign second, and job elimination only in particular cases. Engineers who define system design, run reviews, and own code quality, reliability, and security in production do not face that pressure in the same way. Their work becomes harder to replace and more valuable to teams.

This article looks at what AI can already do, what is likely to change next, what remains uncertain, and which skills and company-level choices matter most.

What can AI already do in software engineering?

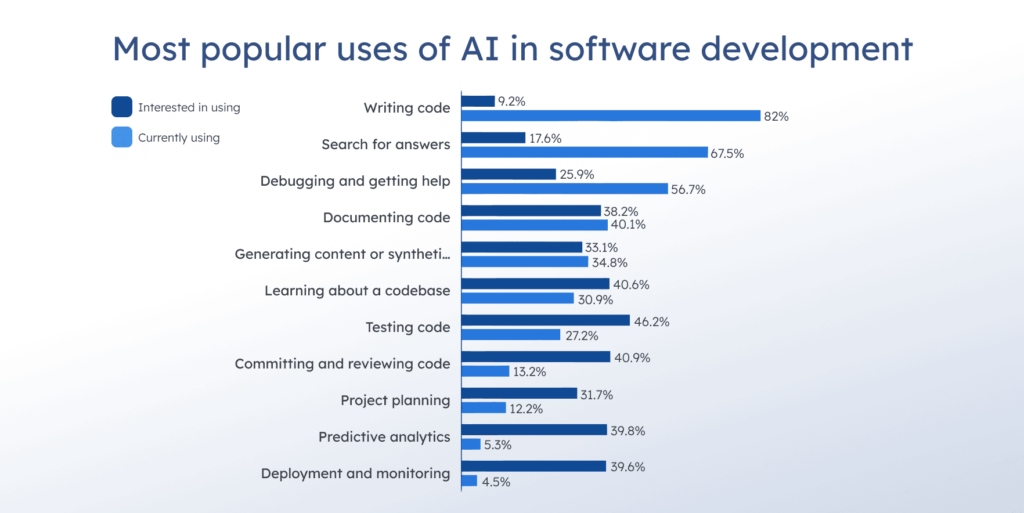

AI performs best when intent is clear and the system context is available, but struggles when requirements are ambiguous, technical rules are missing, or a change carries real production risk. The 2025 Stack Overflow Developer Survey shows that 84% of respondents use or plan to use AI in their development process, and 51% of professional developers use AI tools daily. That makes AI part of everyday software development already, even if its strengths remain uneven. With that baseline in mind, here’s what AI can already do today.

Most popular uses of AI in software development. Source

First-draft coding and boilerplate

A big part of this shift comes from generative AI development tools that serve as a fast first pass for routine programming. They can accurately generate scaffolding for features, CRUD endpoints, API wrappers, test stubs, documentation, and migration scripts, especially when the interface is clear and examples already exist in the codebase.

In a controlled GitHub Copilot experiment, developers completed a JavaScript HTTP server task 55% faster with Copilot than without it. It doesn’t mean developers can code hands-free, but it does mean they spend less time on repetitive implementation and can focus on integration, edge cases, and validation.

Code review assistance and refactoring suggestions

AI can support code review much like strong developer tooling. It can flag likely issues, suggest refactors, and keep style consistent across a codebase. It sounds promising, especially when it also helps reviewers move faster, summarize large diffs, and propose tests to cover the code change.

McKinsey estimates generative AI could raise software engineering productivity by 20–45%, mainly because it reduces time spent on tasks such as drafting, refactoring, and parts of root-cause analysis.

But the catch here is context. AI suggestions may look correct yet miss local assumptions, edge cases, or existing constraints, so teams still need strong human review for correctness, security risks, and design trade-offs. There’s also a governance angle: as AI-generated code becomes more common, licensing and code-cloning concerns can arise, especially if developers paste large blocks without checking their provenance.

Faster onboarding and searching in large codebases

One of the most practical gains is speed in unfamiliar systems. AI tools can summarize modules, explain what a service does, show where a function is used, and suggest where to make changes, especially when documentation is thin and the codebase is large.

That matters because the slow part of software development isn’t writing code. It’s locating the right component, understanding downstream effects, and avoiding accidental breakage. Developers use AI heavily in their daily work, but verification still takes time: 66% of developers say AI outputs are almost right but not quite, and 45.2% say debugging AI-generated code takes longer.

In other words, AI accelerates orientation and search, while engineers still have to review and test to keep changes safe for production.

Debugging support for known failure patterns

AI helps when the issue is familiar and the signals are already in front of you. It can summarize logs, point to likely error sources, and suggest what telemetry to check next. AI can also flag suspicious config changes, but it still does not replace the final decision on whether to roll back, because that depends on production context, system dependencies, and blast radius.

Test drafting for routine behavior

AI can draft unit tests and basic integration checks when the expected behavior is clear. It can generate test scaffolds, list edge cases, and propose negative tests for inputs and validation. Even so, tests still need human ownership, because shallow checks can pass while missing the invariant that actually matters in production.

Documentation and runbooks that stay close to code changes

AI is useful for turning diffs into readable summaries, release notes, and first-pass runbooks for support. This is where it saves time for teams with very sparse documentation and frequent changes. It still needs review, or it turns into clean text that no longer matches the system after a few iterations.

What changes are likely in the near future?

The near future of software development is less about whether artificial intelligence can write code and more about how teams control the work it produces. AI-generated code that is almost correct still fails in production, so the real question becomes process, validation, and ownership.

AI agents that complete multi-step tickets

We are moving from autocomplete toward AI-assisted agents that can plan a change, modify code, generate tests, and open a pull request. This is where the debate over whether AI will replace software engineers gets louder, because the workflow starts to look end-to-end.

In practice, it works best when the scope is narrow and the requirements are explicit: small feature additions, known patterns, strong test suites, and clean interfaces. But it breaks down when a requested code or product change depends on domain judgment, unclear specs, legacy constraints, or risky dependencies, because an agent can produce plausible outputs that do not match the system’s actual rules.

Gartner predicts that by the end of 2026, 40% of enterprise apps will run task-specific agents, up from just under 5% in 2025. Tools already do this in production for clean, explicit work. Everything outside that narrow band still requires human gatekeeping through code review, test coverage, and release controls, especially when the change touches security risks or customer-facing behavior.

The shift from writing code to operating code

As tools improve, engineers spend less time typing and more time running a controlled loop: specify constraints, generate a draft, validate, and ship safely. The work shifts toward better specs, stronger tests, tighter acceptance criteria, faster validation in CI/CD, and post-release monitoring.

This is also where the junior job market gets pressure: if AI-assisted coding handles routine programming faster, entry-level roles focused mainly on implementation become easier to replace, while roles that own quality, review, and production outcomes become more valuable.

Stanford Digital Economy Lab’s 2025 analysis reports that workers aged 22–25 saw a 6% employment decline in the most AI-exposed occupations from late 2022 to September 2025, while older workers saw 6–9% growth. This suggests early-career roles feel the pressure first, even while overall employment grows.

Security and compliance become the bottleneck

As more code is generated, the attack surface grows unless review and testing keep up. That is the trade: faster output versus higher risk of silent regressions, insecure defaults, and copied patterns that do not fit your environment. Veracode’s 2025 GenAI Code Security Report found 45% of AI-generated code samples failed security tests and introduced vulnerabilities featured in the OWASP Top 10 list, and Java had the highest security failure rate at 72%.

So now, can AI replace software engineers? For many IT companies, that is still the wrong question. The more relevant one is who remains accountable for the code that ships.

Verification becomes a bigger share of the work

The extra load shows up in real effort, too. In 2026, 38% of developers say reviewing AI-generated code takes more effort than reviewing human-written code (only 27% find it easier). When AI drafts fly out faster, teams end up pouring more hours into validation, tests, and fixes to catch those silent regressions.

The safest path isn’t raw coding speed anymore. It’s learning to spot flaws fast, write bulletproof tests, and own the review that keeps bad code from reaching production.

What remains uncertain and why forecasts differ

The loudest takes on whether software developers will be replaced by AI usually assume that better models automatically equal reliable automation. Real software engineering does not work that way, and the technical gap is only half the story. So what, exactly, remains uncertain?

Complex tasks, unclear specs, messy codebases

AI-assisted tools perform best when the work is bounded and the requirements are clear. Many real tickets are the opposite. Specs are incomplete, edge cases live in production history, and the codebase has coupling that is not written down anywhere.

In those conditions, AI can generate code that appears reasonable but fails to meet the system’s real constraints, especially when changes affect multiple services, data contracts, or long-lived business logic. This is one reason forecasts differ: some teams work in clean, modular systems with strong tests, while others manage legacy environments where verification is slow and mistakes are costly.

Forecasts are split because the evidence points in two directions at once. AI works well on bounded tasks, yet a 2025 randomized trial found that experienced open-source developers took 19% longer to complete real tasks when AI tools were allowed, which shows that automation can still add overhead in messy, high-context work.

Accountability and liability

In regulated environments, the key question is not whether an LLM can write code, but who signs off on the risk. Finance, healthcare, automotive, and aerospace operate with strict requirements for audit trails, safety, and failure handling. AI-generated code can be part of the workflow, but it does not remove accountability.

Across these sectors, AI adoption is slowed not only by technical limitations but also by liability concerns. Gartner expects AI-related legal claims tied to insufficient guardrails to rise sharply by the end of 2026, which reinforces the point that governance, auditability, and human sign-off remain bottlenecks in high-risk environments.

Human factors

Even when the tooling works, it can’t guarantee productivity. Output depends on timing, task fit, team habits, and skill level. Some engineers use AI-assisted coding like a strong pair programmer and get faster. Others lose time context-switching, reviewing low-quality suggestions, or debugging incorrect changes. The same tool can help one team and frustrate another. The difference usually comes down to process maturity and how much of the work is validated by rigorous tests and review.

Who is most at risk?

Highest exposure. Work that looks like a template

Junior roles are most exposed when the day-to-day job involves repetitive tasks. That includes boilerplate changes, CRUD work, small bug fixes with obvious causes, and tickets where the right answer already exists in the codebase. In these cases, large language models can draft code quickly, and the remaining work becomes review and validation. This is also where the matter of whether AI is replacing developers shows up in hiring: companies can raise output per senior engineer and need fewer implementers.

Repetitive QA scripting can also be exposed when requirements are stable and the test pattern repeats. AI can draft test scaffolds and basic checks fast, which reduces the volume of manual scripting work. QA does not disappear, but the bar shifts toward higher-value testing: coverage strategy, risk-based testing, and failure analysis.

Lower exposure. Roles tied to production ownership

Engineers are harder to replace when they own outcomes that tools cannot guarantee. For example, senior architect-level engineers spend time on system design, trade-offs, and long-term constraints. Security engineers own threat models, abuse cases, and the cost of being wrong. Platform and reliability engineers deal with failure modes, deployment safety, and incident response under incomplete information. Domain-heavy product engineers translate messy business reality into software behavior and decide what “correct” means. These roles still use AI tools, but the tool assists and doesn’t take responsibility.

AI won’t take your job, but it will take the basics

I don’t think AI is going to kill off every engineering job by next week, but it does put pressure on entry-level roles. I see this in my own work constantly.

These tools are fast, sure, but the machine has no sense of consequence. It doesn’t care if a production environment goes dark at midnight because it has zero skin in the game. It lacks that gut feeling you get when you know a specific change is risky.

The teams that win right now aren’t the ones pumping out the most lines of code. It’s the ones who can actually prove their changes are secure and won’t break the audit trail. Because the AI misses context, the burden of verification actually falls harder on us. So, we have to handle the big picture and the system architecture. That’s the only way to catch a hallucination before it hits production and breaks everything.

What do engineers need in the AI-driven era?

Disciplines that still matter

As the machine takes over the boilerplate, your value shifts entirely to mastering the complex, messy realities of operating software in production.

System design

The real work here is taking a messy business requirement and breaking it down into hard boundaries, data flows, and failure modes. Anyone can generate code now, but you have to explain the trade-offs without hand-waving: why a queue here, why a synchronous call there, what happens when a dependency slows down, and how you prevent cascading failures. When AI writes fast, architecture mistakes get shipped faster too, and they cost more later.
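One common answer to "what happens when a dependency slows down" is a circuit breaker. The sketch below is a minimal illustration, not a production implementation: the class name, the thresholds, and the injectable `clock` parameter are all assumptions made for the example.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitOpenError(RuntimeError):
    """Raised when calls are rejected because the breaker is open."""


class CircuitBreaker:
    """Stops calling a failing dependency for a cool-down period."""

    def __init__(self, max_failures: int = 3, reset_after: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.max_failures = max_failures
        self.reset_after = reset_after
        self.clock = clock  # injectable so tests can control time
        self.failures = 0
        self.opened_at: Optional[float] = None

    def call(self, fn: Callable[[], T]) -> T:
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                # Fail fast instead of piling more load on a sick dependency.
                raise CircuitOpenError("dependency is cooling down")
            # Cool-down elapsed: allow a trial call; one failure reopens.
            self.opened_at = None
            self.failures = self.max_failures - 1
        try:
            result = fn()
        except Exception:
            self.failures += 1
            if self.failures >= self.max_failures:
                self.opened_at = self.clock()
            raise
        # Any success clears the failure count.
        self.failures = 0
        return result
```

Wrapping an outbound call as `breaker.call(lambda: client.fetch(order_id))` (where `client.fetch` is a hypothetical dependency) turns a slow pile-up into an immediate, explicit error that callers can handle. Whether that trade-off is right for a given path is exactly the kind of design decision the tool cannot make for you.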

API contracts and interface thinking

In any distributed system, the API contract is exactly where teams break each other. Unclear payloads, undocumented rules, versioning that wasn’t planned, temporary fields that become permanent: this is what kills velocity. Engineers who create simple, reliable interfaces help keep systems running smoothly. That skill doesn’t get automated away; it gets priced higher as more code gets generated and glued together.

Observability and incident thinking

A lot of engineering work happens after the merge. If you can’t explain what your change did in production, you’re guessing. Knowing how to place logs, metrics, traces, and alerts means you’re building software that can be operated.
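As a small illustration of software that can be operated, here is a sketch of a decorator that emits one structured log record per call, including outcome and duration. It uses only the Python standard library. The logger name `orders`, the `observed` decorator, and the `apply_discount` function are hypothetical names chosen for the example.

```python
import functools
import json
import logging
import time
from typing import Any, Callable

logger = logging.getLogger("orders")  # hypothetical service name


def observed(operation: str) -> Callable:
    """Log one structured record per call: operation, outcome, duration."""

    def decorator(fn: Callable) -> Callable:
        @functools.wraps(fn)
        def wrapper(*args: Any, **kwargs: Any) -> Any:
            start = time.perf_counter()
            outcome = "ok"
            try:
                return fn(*args, **kwargs)
            except Exception as exc:
                # Record the error type, then let the exception propagate.
                outcome = type(exc).__name__
                raise
            finally:
                record = {
                    "operation": operation,
                    "outcome": outcome,
                    "duration_ms": round((time.perf_counter() - start) * 1000, 2),
                }
                logger.info(json.dumps(record))

        return wrapper

    return decorator


@observed("apply_discount")
def apply_discount(total_cents: int, percent: int) -> int:
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return total_cents * (100 - percent) // 100
```

With records like these, "what did my change do in production" becomes a query over `operation` and `outcome` rather than a guess. A real setup would likely add request IDs and ship metrics separately.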

Security basics as part of normal development

In many organizations, security will be the gating constraint, because AI-generated code increases volume and variation, and weak review means more mistakes slip through. Engineers who understand auth boundaries, input validation, dependency risk, secrets, and safe defaults become the people who keep the ship afloat.

Performance reasoning and distributed debugging

The hardest bugs show up when a growing system behaves differently under load. Dealing with timeouts, retries, queues, caching, race conditions, memory leaks, slow queries, and backpressure is where people earn their paycheck. AI can help you search and propose fixes, but you still need someone who can reason about the system as a whole.

Tips for working with AI tools

Write specs that don’t leave room for silent failure

Most stories about wrong AI code start with weak requirements. That’s why you need specs that describe the right steps and define constraints: what must never change, what counts as correct, what needs to be logged, what should happen on errors, what the performance ceiling is, and what the privacy boundary is.

Treat AI output like a junior contribution

Review diffs for intent and look for subtle breakages: off-by-one behavior, missing null handling, incorrect permissions, wrong assumptions about data shape, dependency misuse, fragile parsing, and unhandled failure modes.

Prove it with tests and small, safe experiments

Test your changes with real checks and low-risk trials. If you can’t show it works, you don’t understand how it works. Write tests that cover the failures users notice first, such as wrong totals, broken permissions, duplicates, missing events, silent data loss, or slowdowns. Then run the full suite locally and in CI, and make sure it hits the production killers: empty inputs, time zones, rounding errors, retries, partial failures, duplicated requests, and out-of-order events.
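To make a few of those production killers concrete, here is a minimal sketch of tests for empty inputs, duplicated requests, rounding, and out-of-order events. The `order_total` function and its `(request_id, amount)` input shape are hypothetical. The tests are written as plain functions with `assert`, so a runner such as pytest would pick them up.

```python
from decimal import Decimal, ROUND_HALF_UP
from typing import Dict, Iterable, Tuple


# Hypothetical unit under test: totals payments and ignores replayed requests.
def order_total(payments: Iterable[Tuple[str, str]]) -> Decimal:
    """Sum (request_id, amount) pairs, counting each request_id once."""
    seen: Dict[str, Decimal] = {}
    for request_id, amount in payments:
        # A retried request carries the same id, so it must not be added twice.
        seen.setdefault(request_id, Decimal(amount))
    total = sum(seen.values(), Decimal("0"))
    return total.quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


def test_empty_input_is_zero() -> None:
    assert order_total([]) == Decimal("0.00")


def test_duplicated_request_counts_once() -> None:
    assert order_total([("r1", "10.00"), ("r1", "10.00")]) == Decimal("10.00")


def test_no_float_rounding_drift() -> None:
    # With binary floats, 0.1 + 0.2 != 0.3; Decimal keeps the total exact.
    assert order_total([("a", "0.10"), ("b", "0.20")]) == Decimal("0.30")


def test_out_of_order_events_give_same_total() -> None:
    events = [("a", "1.005"), ("b", "2.00"), ("c", "3.10")]
    assert order_total(events) == order_total(list(reversed(events)))
```

None of these tests is sophisticated. The point is that each one names an invariant a shallow, happy-path check would miss.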

Operational readiness

More AI-assisted coding often means more changes landing faster. That raises the need for guardrails: CI gates, code owners, review rules, security checks, release discipline, and rollback paths. Engineers who know how to ship safely become the ones teams trust.

How should companies prepare for AI adoption?

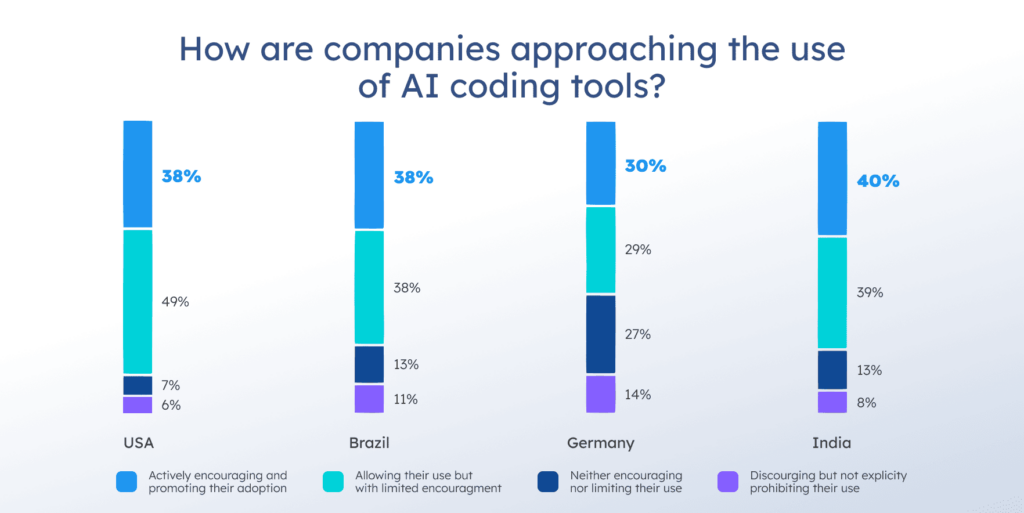

Companies aren’t sitting on the sidelines. GitHub’s survey asked developers how their companies actually approach AI coding tools, and the answers are telling. AI isn’t some side experiment anymore, and it’s quickly turning into official company policy. See what companies can do to prepare for the shift.

How respondents say their companies are approaching the use of AI coding tools. Source

Create an adoption plan to reduce risk

AI-assisted coding works best when the rules are boring and clear. Before you roll it out across software development, decide where it is allowed to touch production code and where it stays a drafting tool. Let’s see what steps you can take for that.

- Set scope by risk level. Define a green zone, for example, boilerplate, internal tooling, docs, test stubs, and small refactors. The yellow zone may include customer-facing features with strong test coverage. The red zone should cover payments, authentication, permissions, encryption, medical/financial logic, and anything that could leak data or violate compliance.

- Put quality gates in writing. No merges without tests passing, review approval, and a check for security risks and dependency changes. If AI-generated code is involved, require a short human-written note on what changed, why, and how it was verified.

- Make review rules explicit. Treat AI output like a junior draft, with reviewers checking correctness, edge cases, failure modes, and whether the change fits the system design. Large language models can produce clean-looking code that quietly violates assumptions, so your process has to catch that.

- Keep an audit trail when you’re subject to regulation. Log prompts/outputs where possible, keep PR history clean, and store the evidence that matters, like test results, approvals, and the reasoning behind risk decisions. In finance, healthcare, automotive, aerospace, that level of traceability is often required before a release can move ahead.
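The zone and gate rules above can be written down as code so that CI enforces them instead of memory. The sketch below is one possible shape, under stated assumptions: the path patterns, the function names, and the rule that AI-assisted changes never merge into the red zone are all hypothetical choices for illustration, not a standard.

```python
from enum import Enum
from fnmatch import fnmatchcase
from typing import Iterable


class Zone(Enum):
    GREEN = 1   # AI drafts allowed with normal review
    YELLOW = 2  # allowed only with strong test coverage
    RED = 3     # AI stays a drafting tool; no AI-assisted merges


# Hypothetical path patterns; first match wins, so list the strictest first.
ZONE_RULES = [
    ("services/payments/*", Zone.RED),
    ("services/auth/*", Zone.RED),
    ("*/crypto/*", Zone.RED),
    ("services/*", Zone.YELLOW),
    ("docs/*", Zone.GREEN),
    ("tools/*", Zone.GREEN),
    ("tests/*", Zone.GREEN),
]


def zone_for(path: str) -> Zone:
    for pattern, zone in ZONE_RULES:
        if fnmatchcase(path, pattern):
            return zone
    # Unknown paths default to the cautious middle zone.
    return Zone.YELLOW


def change_zone(paths: Iterable[str]) -> Zone:
    """A change is as risky as the riskiest file it touches."""
    return max((zone_for(p) for p in paths),
               key=lambda z: z.value, default=Zone.GREEN)


def merge_allowed(paths: Iterable[str], ai_assisted: bool, tests_passed: bool,
                  approved: bool, note: str = "") -> bool:
    """Apply the written quality gates to one pull request."""
    if not (tests_passed and approved):
        return False
    if not ai_assisted:
        return True
    if change_zone(paths) is Zone.RED:
        return False
    # AI-assisted changes need a human-written verification note.
    return bool(note.strip())
```

For example, `merge_allowed(["docs/a.md"], ai_assisted=True, tests_passed=True, approved=True)` returns `False` until someone adds a note on what changed and how it was verified.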

Companies that want to move from experiments to production usually require more than just coding tools. They must establish clear adoption rules, validation flows, and a practical AI development strategy that fits real engineering work.

Measure impact honestly

If you only track how fast code gets written, you’ll miss the bill that shows up later. Measure the whole pipeline, including the cost of cleaning up mistakes.

- Cycle time. How long from idea to production (expect it to drop if things go well).

- Defect rate. Bugs found in production or after release.

- Incident rate. How often things break and need fixes.

- Time spent in review or fixing regressions. If this number starts rising, it usually means low-quality changes are getting through too early or AI-generated output needs too much cleanup.

- Rollback frequency. Frequent rollbacks usually show that testing and review did not catch issues before deployment.
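A few of these metrics can be computed from release records you probably already have. The sketch below assumes a hypothetical `Release` record with four fields. Incident rate and review time are left out because they usually come from other systems.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median
from typing import Dict, List


@dataclass
class Release:
    started: datetime    # work on the change began
    deployed: datetime   # change reached production
    defects: int         # bugs traced back to this release
    rolled_back: bool


def summarize(releases: List[Release]) -> Dict[str, float]:
    """Return cycle time, defect rate, and rollback rate for a set of releases."""
    if not releases:
        raise ValueError("need at least one release")
    n = len(releases)
    cycle_hours = [
        (r.deployed - r.started).total_seconds() / 3600 for r in releases
    ]
    return {
        "median_cycle_hours": median(cycle_hours),
        "defects_per_release": sum(r.defects for r in releases) / n,
        "rollback_rate": sum(r.rolled_back for r in releases) / n,
    }
```

Comparing these numbers before and after an AI rollout, on similar kinds of work, says more than counting lines of generated code.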

Plan your workforce

Most companies don’t need fewer humans so much as they need people to stop treating code like a fire-and-forget exercise. The real change hits in how we handle verification, especially for juniors who are just starting out.

- Train juniors around verification. Teach them to write tests that catch regressions, read diffs with suspicion, reproduce issues locally, and prove a change is safe with small experiments (flags, staged rollouts, monitoring).

- Pair AI with seniors in production-critical areas. Novices often miss subtle errors, so AI output still needs experienced review when code quality and reliability matter. Seniors set constraints, define acceptance criteria, and block risky shortcuts.

- Update role expectations. Routine ticket delivery cannot be the whole job anymore. The juniors who grow fastest are the ones who can validate AI-generated changes, understand failure modes, and communicate trade-offs.
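The "small experiments" mentioned above, such as flags and staged rollouts, are a good first exercise for juniors because the mechanism is simple. Here is a minimal sketch of a deterministic percentage rollout. The `in_rollout` function and its flag and user naming are hypothetical, and real feature-flag systems offer far more.

```python
import hashlib


def in_rollout(flag: str, user_id: str, percent: int) -> bool:
    """Deterministically place a user inside or outside a staged rollout."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    # Hash flag and user together so each flag gets its own user split.
    digest = hashlib.sha256(f"{flag}:{user_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 100  # stable bucket 0..99
    return bucket < percent
```

Because the bucket depends only on the flag name and the user ID, a user who sees the change at 10% still sees it at 50%, and setting the percentage back to 0 acts as an immediate off switch.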

Conclusion

Will AI replace programmers by 2030? If you treat replacement as task displacement first, the answer is already visible. Routine coding and maintenance work is getting cheaper, faster, and easier to draft, so the entry-level path built around small implementation tasks is under pressure.

Yet the work that keeps software development alive in the real world doesn’t shrink the same way. Production still punishes sloppy assumptions, and someone must define what correct looks like. The engineers who do that keep the contracts intact and watch for regressions in review. When the code ships, the responsibility for the risk is also theirs.

For engineers, that means moving up the stack toward system design, testing strategy, observability, and security basics, then using AI-assisted tools as acceleration, not as a substitute for judgment. For companies, it means setting clear boundaries and measuring outcomes like defect rates instead of celebrating raw output.

This shift suggests the future will look less like AI replacing software engineers and more like the same job performed to a higher standard.
